Reasons for Curtailing Our – and Our Children's – Screentime [part 2/2]

But if we "can't" cut back on our screentime now, then at the very least we ought to be using those screens to prepare for the time when they'll be overwhelmingly taken away from us

Following on from the previous post, which ever-so-briefly touched upon the deleterious effects that screens can have on children, it's worth noting that the heavy-handed approach of a government – like China's – utilising privacy-encroaching methods to limit the usage of said technologies would quite justifiably be disagreeable to most people in more democratic countries. So rather than following the example of China's government, a suggestion made by the long-departed Chinese philosopher Confucius (who may actually have been authoritarian) might prove more palatable. As Confucius stated in The Great Digest,

...wanting good government in their states, they first established order in their own families; wanting order in the home, they first disciplined themselves...1

Although I'd yet to read that quote at the time, it was an approach similar to this that tipped the scales for yours truly and sealed the deal on my all-encompassing abandonment of watching film and television fifteen or so years ago. I've already elaborated in an earlier post on my three primary reasons for ditching my (careerist) aspirations to become a filmmaker (climate change, centralisation of power, and wannabe-narcissists); it was, however, an entirely different kind of reasoning that made me quit watching the stuff.

Fresh off quitting filmmaking and thinking ahead (as a 27-year-old) to how I might limit the extent to which any future children of mine would have their eyeballs sold by television stations to well-paid flunkies (aka advertisers), I had various ideas pop into my head, one of them being to keep the sole TV set locked away in a cabinet that would be opened only at specific times. But while I figured it likely that I might end up watching television at some late hour after said possible children had gone off to bed, I was well aware that it would only be a matter of time before one of my late-night viewing sessions was discovered by any possible little ones, and that in their eyes I would be seen as a hypocritical fraud. It was that thought (along with the thought of not wanting to turn into a bitter – and possibly crazy – old man yelling "I could have made better!" at the screen while watching movies in theatres) that finally resulted in me saying "ah f**k it" to myself with regard to watching any television (or film) ever again, soon followed by me giving away my prized DVD collection. All of which means that it was in large part children I never even ended up having who prompted me to quit watching film, television, YouTube, etc. (and which also means I've never had the privilege of watching any of those streaming, modern-day cultural institutions like Netflix or Pornhub either).

For various reasons my all-encompassing departure from the quasi-voyeuristic world of watching film and television can be seen as a good thing, sometimes even scientifically so. For starters, most studies on the effects of watching television tend to focus on children (generally finding poorer reading recognition, poorer reading comprehension, poorer math skills, as well as cognitive, language and motor developmental delays), and when they do look at older age groups they tend to study those who partake in "excessive" levels of consumption (more than six hours a day).

In one exception to the norm, a team of American researchers led by Ryan J. Dougherty (of the Johns Hopkins Bloomberg School of Public Health in Baltimore) undertook a 20-year study of 599 participants aged between 30 and 50. Brain scans were performed on all participants and then again 20 years later, and the results were correlated with the average amount of television each individual had watched over the years (deduced from interviews conducted at five-year intervals).

With the participants having watched an average of two and a half hours of television per day, the researchers concluded that the more television an individual watched, the more their frontal cortex and entorhinal cortex shrank. Each extra hour of television watched per day was associated with an additional 0.5% decrease in grey matter, a substrate responsible for an array of bodily and mental functions and whose reduction can contribute to greater cognitive impairment (such as dementia). But as the researchers also noted, participation in stimulating activities that are associated with maintained cognition – like board games, reading, and yes, even video games – reduced the likelihood of dementia.

In another study of an older age bracket, preliminary data from 3,662 adults over 50 who were observed over a six-year period suggested that those who watched 3.5 hours or more of television per day experienced not only cognitive decline but also poorer verbal memory.

The reasons for the deleterious effects routinely seen in studies looking at the fallout from watching television are of course wide and varied, one of these being the unique set of circumstances in which rapidly changing, fragmentary, dense stimuli are coupled with the (inherent) passivity of the viewer. That is, the analytical portion of the brain is essentially turned off while information is uncritically absorbed, bypassing reasoning and analytical processes. Meanwhile, the excessive stimulation generated, which has no outlet, leads to hyperactivity, irritability, frustration, emotional isolation, and more, particularly amongst the young. (To make matters worse, television commercials accentuate the fragmentary visual structure, often heightening the pace in order to capture a viewer's attention and take advantage of their induced vulnerability to, as explained below, the power of suggestion.)

With all of that said (and with a brevity that does the matter no justice), an underlying factor behind the aforementioned is the way in which television leaves people's minds more receptive yet less focused. The fact of the matter is that inserting subliminal messages into television images is rather redundant, seeing as the brain is already in a receptive state conducive to absorbing suggestion. Although the effects don't apply equally to everyone, this state of vulnerability to manipulation arises because within a minute of watching television the brain switches from beta waves, which are associated with logical, active thought, to primarily alpha waves, which are associated with rest and relaxation. This was first discovered in 1969 by the researcher Herbert Krugman, as described by Joyce Nelson in her book The Perfect Machine: Television and the Bomb.

In November 1969, a researcher named Herbert Krugman, who later became manager of public-opinion research at General Electric headquarters in Connecticut, decided to try to discover what goes on physiologically in the brain of a person watching TV. He elicited the co-operation of a twenty-two-year-old secretary and taped a single electrode to the back of her head. The wire from this electrode connected to a Grass Model 7 Polygraph, which in turn interfaced with a Honeywell 7600 computer and a CAT 400B computer.

Flicking on the TV, Krugman began monitoring the brain-waves of the subject. What he found through repeated trials was that within about thirty seconds, the brain-waves switched from predominantly beta waves, indicating alert and conscious attention, to predominantly alpha waves, indicating an unfocused, receptive lack of attention: the state of aimless fantasy and daydreaming below the threshold of consciousness. When Krugman's subject turned to reading through a magazine, beta waves reappeared, indicating that conscious and alert attentiveness had replaced the daydreaming state.

What surprised Krugman, who had set out to test some McLuhanesque hypotheses about the nature of TV-viewing, was how rapidly the alpha-state emerged. Further research revealed that the brain's left hemisphere, which processes information logically and analytically, tunes out while the person is watching TV. This tuning-out allows the right hemisphere of the brain, which processes information emotionally and noncritically, to function unimpeded. “It appears,” wrote Krugman in a report of his findings, “that the mode of response to television is more or less constant and very different from the response to print. That is, the basic electrical response of the brain is clearly to the medium and not to content difference.... [Television is] a communication medium that effortlessly transmits huge quantities of information not thought about at the time of exposure.”2

It should go without saying that alpha waves are by no means a bad thing, particularly when they're achieved via meditation practices, which can promote relaxation and insight. It is when they're induced by television, however, that they can promote unfocused daydreaming, an inability to concentrate, and, by extension, suggestibility.

But it's not simply brainwaves that we should be worried about but also endorphins. Likewise, it's not just television we should be worried about but also what some call "junk tech" (essentially the vast majority of social media). As stated in a Guardian opinion piece,

The parallels between junk tech and the fast food industry are legion. Both target the most vulnerable in society: our children. Both manipulate – one with happy meals, creepy mascots and bright colours, the other with refined techniques such as infinite scrolling, disappearing messages and autoplay features. And both industries are swimming in cash, with fast food estimated to be worth $648bn, and the tech industry predicted to reach $5.2tn by the end of 2020.

Again, leading by example by quitting various social media platforms (like Facebook) can be easy enough, and is sometimes even accomplished accidentally. Personally speaking, I only opened a Facebook account when the platform this blog uses, Ghost, made it possible for posts to be automatically shared to one's Facebook account, thinking that any Facebook followers I amassed (who unsurprisingly didn't quite materialise) would get my posts fed into their feeds. But as I didn't want to actually use Facebook at all, I eventually got the idea to make that notion explicit by putting together an image to that effect and uploading it as my account's banner image.

Well, it may have been pure coincidence, but just days after I applied that image to my account, Facebook cut off my access, claiming they didn't believe I was who I said I was. Moreover, all the hoops I jumped through to prove who I was and regain access to my account proved fruitless. Good riddance, I suppose, and there were certainly no feelings of regret on my end.

But whereas leading by example by completely quitting the watching of film and television, as well as ditching things like Facebook, can be relatively easy, the fact that children can now carry a high-quality screen in their pockets kind of throws a spanner in the works. Because unless one's "loaded" and can afford full-time nannies and tutors and cooks and the rest of it (or home-schools one's children or lives in a multi-generational household), precluding one's children from being exposed to and raised on "junk tech" can be a crapshoot.

Moreover, being loaded doesn't just afford one the ability to keep a close eye on one's children; those who are not only loaded but who also lead the companies that target children and get them hooked on screen-based technologies actually limit their own children's access to the very technologies they make and promote:

- Steve Jobs prohibited his children from using an iPad: "We limit how much technology our kids use at home."

- Bill Gates and his wife restricted their daughter to 45 minutes of video games during weekdays and an hour a day on weekends (she was otherwise allowed to use the computer for homework)

- Google CEO Sundar Pichai's 11-year-old son didn't own a mobile phone and had his time for watching television limited

- Snapchat CEO Evan Spiegel and his wife limited their children to one and a half hours of screen time per week

Of course, enacting such restrictions can be easy enough for a fortunate few, but not so much when you're, say, a parent who has no choice but to work multiple jobs. It's for reasons like the latter that Henrietta Bowden Jones, who runs the NHS National Centre for Gaming Disorders, has stated that

The solutions need to be built into the tech. We are moving to a place where it is no longer acceptable to blame the individual, especially if they are vulnerable, for tech or games addiction.

Which, in effect, somewhat brings us back to the heavy-handed methodology of China's government, albeit in a form that's found wanting. Sure, things like Apple's Screen Time functionality can be useful to parents wanting to restrict things like their children's iPhone usage, until, that is, enterprising children manage to circumvent the device's restrictions in a myriad of ways. In short, unless one's family has things like half-broken televisions whose remote controls can be hidden, restricting access to devices via technology built into the devices themselves largely appears to be a bit of a cat-and-mouse game.

So besides residing in China, home-schooling with uber-vigilance, being mega-rich, or just succumbing to antinatalism, the chances of raising a child in our modern world while successfully enabling them to largely dodge the mind-wasting pitfalls of television, social media, etc., can be pretty slim.

To offer a bit of consolation, not only do reading and writing (hence the value of taking children to libraries from a young age!) require higher brainwave states, which can keep one's brain focused and one's attention strong, but the study that examined the 3,662 adults also found that using the Internet can have similar effects. As the study noted, "other sedentary activities such as using the internet are not associated with cognitive decline and might even contribute to cognitive preservation and reduced dementia risk".

When it comes to yours truly, I may therefore be lucking out in that department. While referencing Nicholas Carr's excellent book The Shallows: What the Internet Is Doing to Our Brains, I wrote some time ago about the deleterious effects that using the Internet has had on my ability to read books. (I presume that my placement on the "spectrum" makes me one of the worse cases in this regard.) But while circumstances haven't allowed me to address that situation in the slightest, in the meantime it's likely that I've rather awkwardly been handicapping myself by immersing myself in the digital realm of the Internet (as opposed to the books on my bookshelf and in libraries) while maintaining an almost entirely analog workflow.

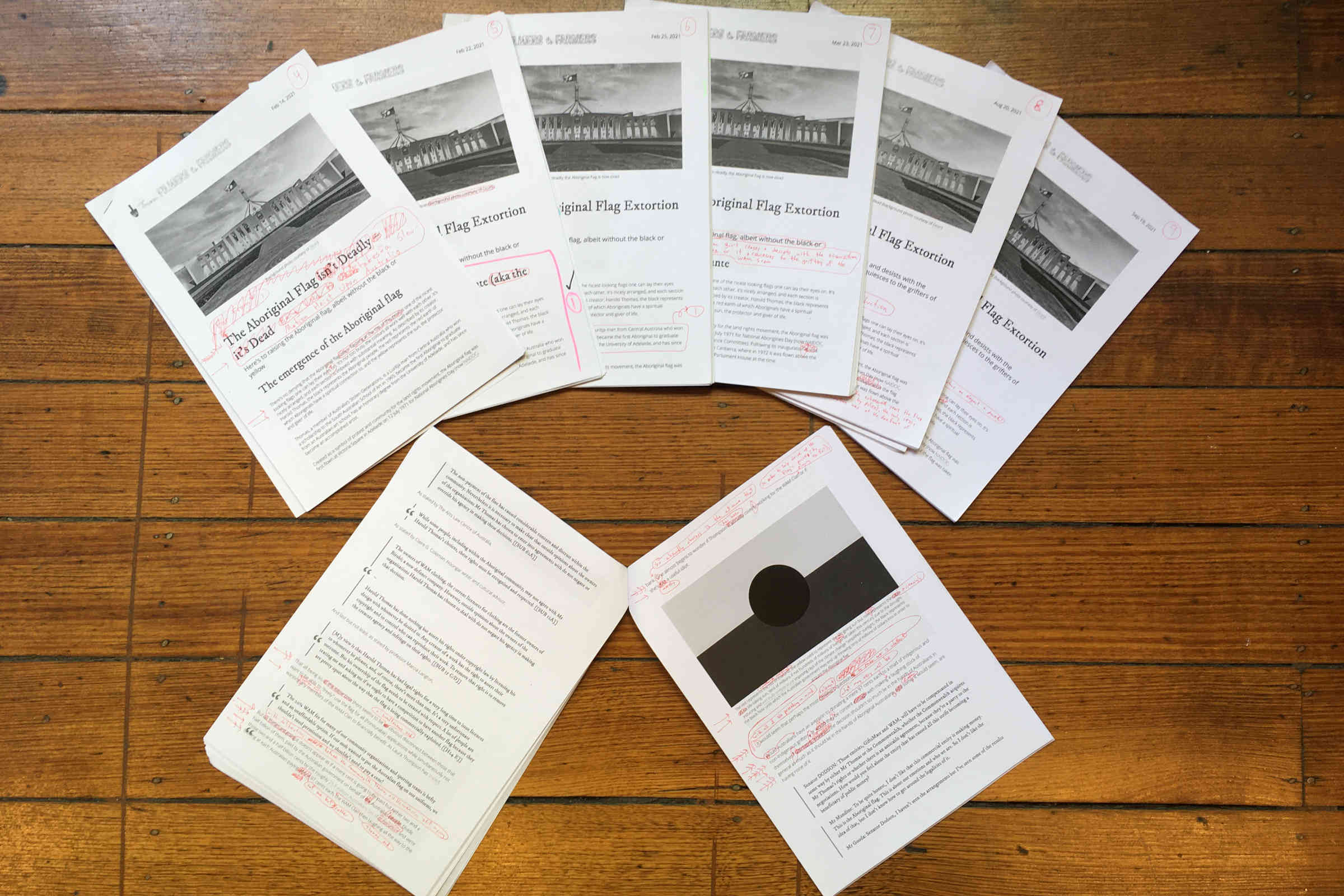

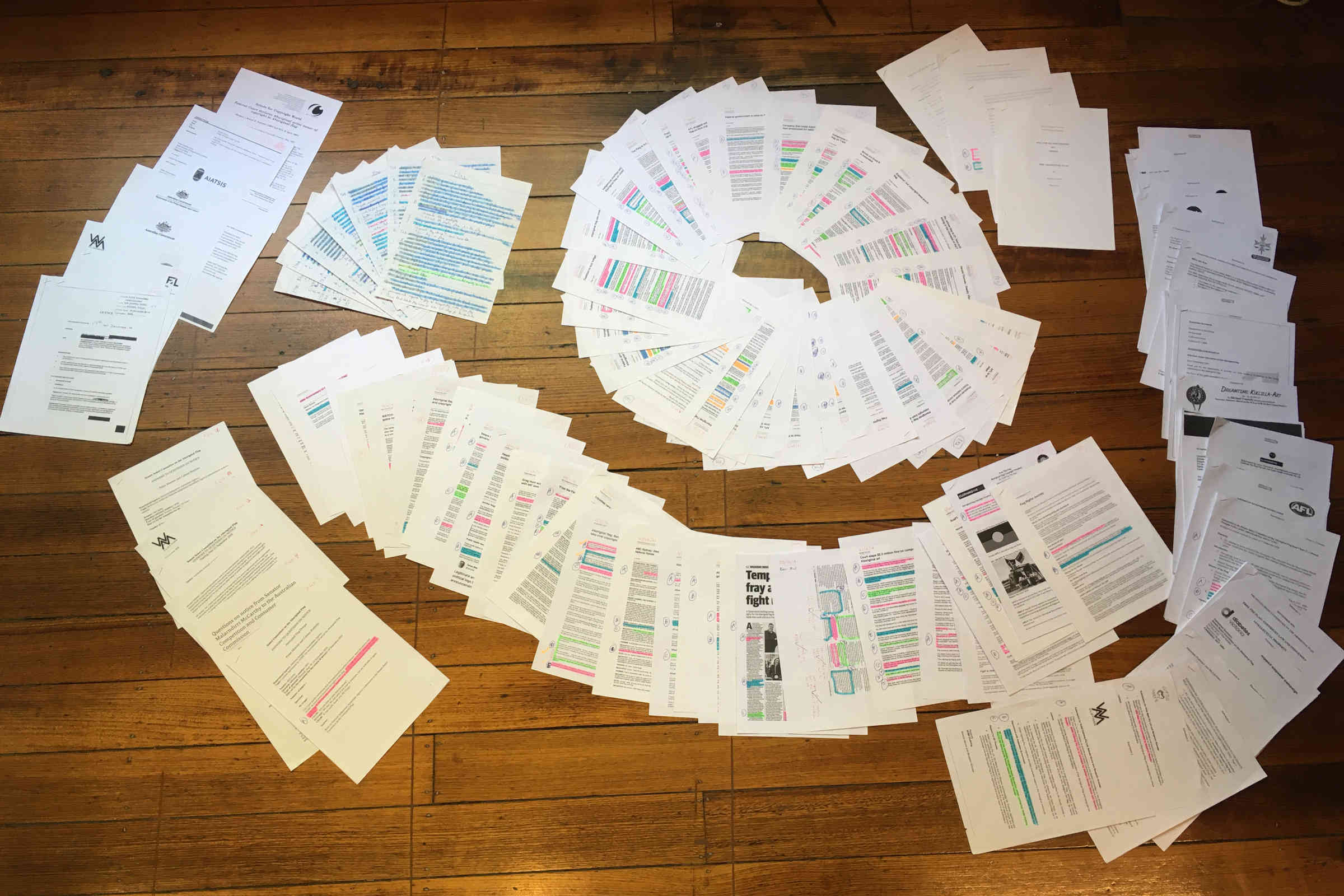

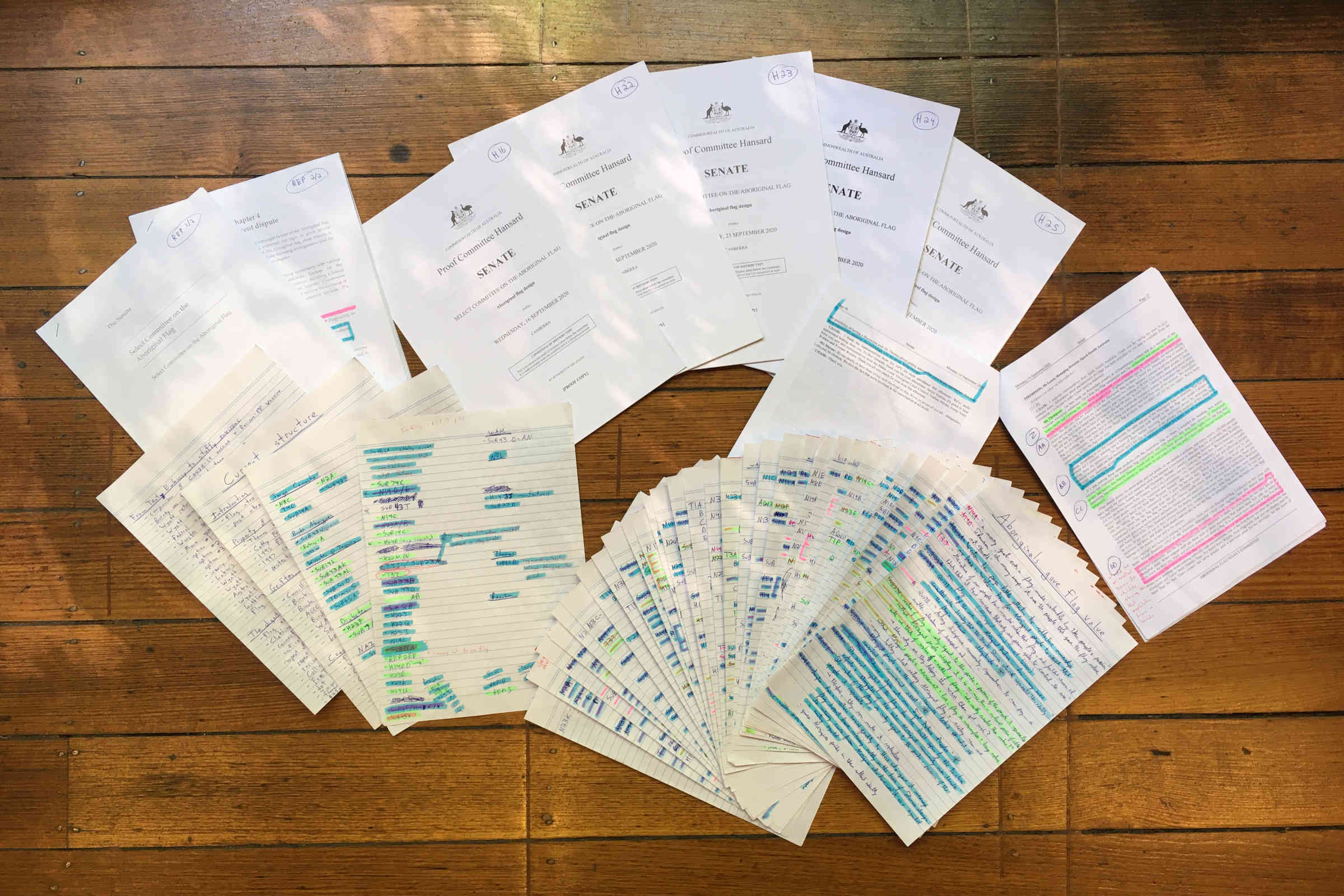

The pictures above show some of the research material, (double-sided) notes and drafts from FF2F's recent post on the Aboriginal flag. The mostly analog workflow was as follows:

- read Internet article(s), in full or just partially for the time being

- bookmark article(s)

- open article(s) in Firefox's reader view, remove images with the web developer tools, and, if not printing directly, save to PDF

- print and number article(s)

- re-read article(s) (in their entirety this time), highlighting key passages in the process with different-coloured highlighters

- in the margins, add ascending letters to all the highlighted passages, and then handwrite a one- or two-line summary of each highlighted passage on its relevant categorised sheet of lined paper, numbering and lettering each individual descriptive note

- write the first draft by hand on lined paper, referencing notes from the various categories as need be, which in turn reference the printed/highlighted articles (this also entails marking off each key passage with a highlighter as it gets used)

- type that handwritten draft into the Ghost editor and then print out that first draft (I've put together a nice print.css file for FF2F's highly customised theme, meaning printouts look great)

- with a fine-tipped red gel pen, write the next draft atop the printout (edits, additions, rearrangements, etc.)

- apply those changes and additions into the editor in Ghost, print out the next draft, then rinse and repeat the process several times as the piece is built out, publishing once a not-too-marked-up iteration is reached

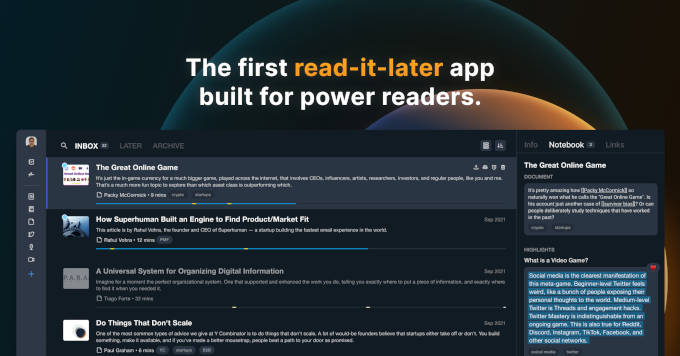

This workflow, while not necessarily convoluted, is nonetheless extremely laborious and time-consuming, and it has seriously hampered the ability of yours truly to publish posts while their topic is still "in the news" (I've got way too many posts – many of them quite lengthy – that for various reasons had to be moved on from, but which in a revamped form may resurface sometime down the road). So a few weeks ago I decided to double down and try to transition to an almost entirely digital workflow, somewhat inspired by a friend who praised and recommended the MarginNote 3 application for allowing him to organise and lay out research for his university papers.

I was somewhat befuddled by the praise, since upon inspection MarginNote 3 turned out to be extremely clunky. But no less than the very next day after I'd discarded it, someone on Ghost's brand-new Discord just happened to mention Readwise as well as its fully overhauled (albeit still in private beta) Readwise Reader.

While the preview images and stated functionality of Reader appear astounding (albeit intended for a different use case than the one I'd be utilising it for), it may very well be able to cut my workflow down from an excruciatingly slow 10-step process to a much quicker 6-step or even 3-step process. But as I'm not part of the private beta, I'll just have to wait and see. Regardless, here's what the new workflow for constructing FF2F posts could be:

- read article(s), using the Firefox Reader extension to "highlight" key passages and send them to Reader with tag(s) applied simultaneously (?). (Or articles/papers/PDFs may very well be read in Reader itself, where all the categorising/tagging is done in-app at the same time.)

- rearrange the order of highlighted passages within each tag (I'm hoping this will be possible)

- write the first draft directly in the Ghost editor, referencing all the categorised highlighted passages on Reader on an adjacent screen

I'll have to see how it all works for me (once I actually get hold of Reader): although I may just keep editing the initial draft in the Ghost editor, it's possible that I'll stick to repeatedly reworking subsequent drafts on printout after printout. If so, the subsequent steps will be:

- print out that first draft (which may very well have been handwritten)

- make changes with the fine-tipped red gel pen, while referencing Reader on my laptop's screen

- apply those changes upon the draft in Ghost's editor, print that out, rinse and repeat several times

In the meantime I've started using regular Readwise (which was used for the very post you're reading), whose slight clunkiness renders the process a bit convoluted and so still leaves me needing to print out collections of highlighted tags. We'll see how things go once I've secured access to the Reader app itself.

Anyhow, while the possible complete overhaul of my workflow may very well not give me dementia (and may actually help me avoid it), doubling down (if not tripling or even quadrupling down) on my screen usage wouldn't exactly set much of an example for any theoretical tykes of mine whom I wanted to help avoid the stuff, and that's even if I were using those screens solely for research purposes rather than for entertainment (which would generally mean moving images, since entertainment in its various forms and genres is what screens are conducive to and, arguably, inherently designed for).

All of which, theoretically at least, places me between a rock and a hard place. That is, how would one reconcile what could very well count as extremely excessive screen usage with the suggestion that children scale back on theirs, when those children could easily see no difference between using a screen to watch, say, "educational" programming and using one purely for research/work purposes?

Putting aside the issue of the overwhelming presence of screens at grade schools (places whose purpose is, arguably, to raise compliant little idiots), the only option seems to be to (a) lead by example by not using screens for moving images, and then (b), to the extent that it's possible (depending on their age), be up front with children about the whole thing:

Look kid, industrial civilisation is going to hell in a handbasket, and there's a good chance that these screens will turn you into a run-of-the-mill, pot-bellied, dumb f**k, and I don't want any part in helping you become any of that, particularly in these increasingly trying times. On top of that, if the doo-doo does hit the fan sooner rather than later, well, that's part of the reason why we around here have been busy all these years localising our food production and doing all the other stuff we do, and sitting on your rear like a dumb-ass watching stupid shit isn't going to help you with any of that, now or in the future. There's also a good chance that when the doo-doo hits the fan these screens will become pretty much useless, so in the meantime, and as far as we need to, it's best we judiciously use them to prepare for a time when we'll no longer have them.

Or something along those lines.

Because although lawyer Jes Wasserstein relayed her frustrations with living in a QR-code world in her recent Guardian piece, in which she stated that "My life without a smartphone is getting harder and harder", she also closed the article by adding that "There is no winding back the clock. Clocks aren't wound any more". But such a notion ignores the fact that at some point in the future clocks will no longer run on power from the wall (so to speak), at which point, supposing we haven't jettisoned the desire to use timepieces altogether (that's a whole other issue), of course we'll be able to wind clocks, and we won't, in fact, have much – if any – other choice.

In the meantime, we here at From Filmers to Farmers will try our best to not get too excited about Readwise's upcoming Reader application and what it might be able to do for this blog's output.

NOTE: This blog does not use affiliate links, so if you choose to sign up to Readwise via the above link (or even to Ghost via the logo/link at the bottom of the screen) nobody at From Filmers to Farmers will actually make any money. Thank you for your understanding.

1. Confucius, quoted in Wendell Berry, The Unsettling of America: Culture and Agriculture (New York, NY: Sierra Club Books, 1987), p. 38.

2. Joyce Nelson, The Perfect Machine: Television and the Bomb (Philadelphia, PA: New Society Publishers, 1992), pp. 69-70.

![Reasons for Curtailing Our – and Our Childrens' – Screentime [part 2/2]](https://storage.ghost.io/c/d9/c3/d9c3ae66-3c63-49da-b4d3-18e56974e2b1/content/images/2021/11/boy-watching-TV.jpg)
